Blog

Writing on LLMs, inference optimization, research, and ideas.

Posts

INT4, INT8, GPTQ, AWQ, QLoRA — a practical breakdown of the quantization methods reshaping how we deploy LLMs, plus the story behind building an interactive gallery to explore them.

Apr 2026 · 8 min read · LLMs · Quantization · Systems

How Google compresses the KV cache by 6× with zero accuracy loss — random rotation, per-element quantization, and QJL residual correction explained. Presented at ICLR 2026.

2026 · 10 min read · Quantization · KV Cache · LLM Inference

Program synthesis, test-time training, fluid intelligence: what makes ARC-AGI the hardest benchmark in AI, and my early experiments competing on it, starting from a random-agent baseline.

2026 · 7 min read · AGI · Reasoning · Benchmarks

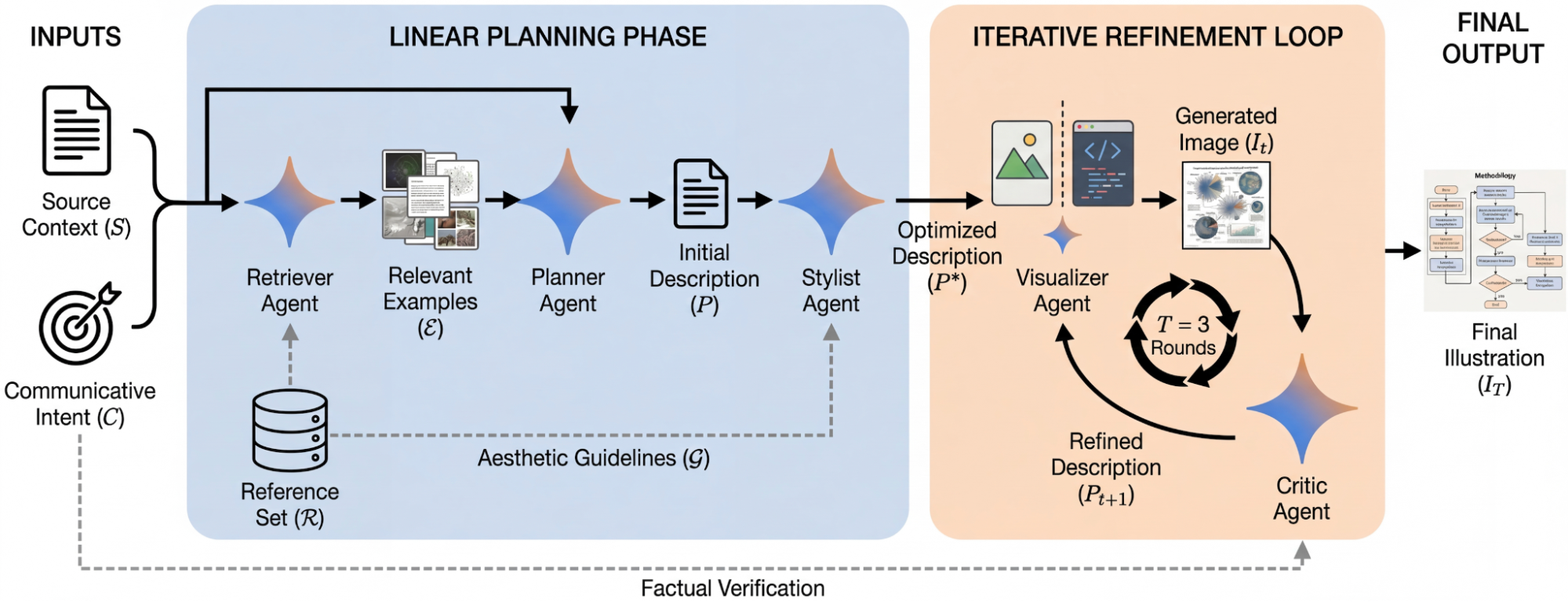

Google's two new research agents — one for automated figure generation, one for AI-assisted peer review. What they get right, what they miss, and why novelty evaluation is still a hard problem.

2026 · 8 min read · AI Tools · Research · Agents

More posts coming soon. Follow @Asg_Wolverine for updates.